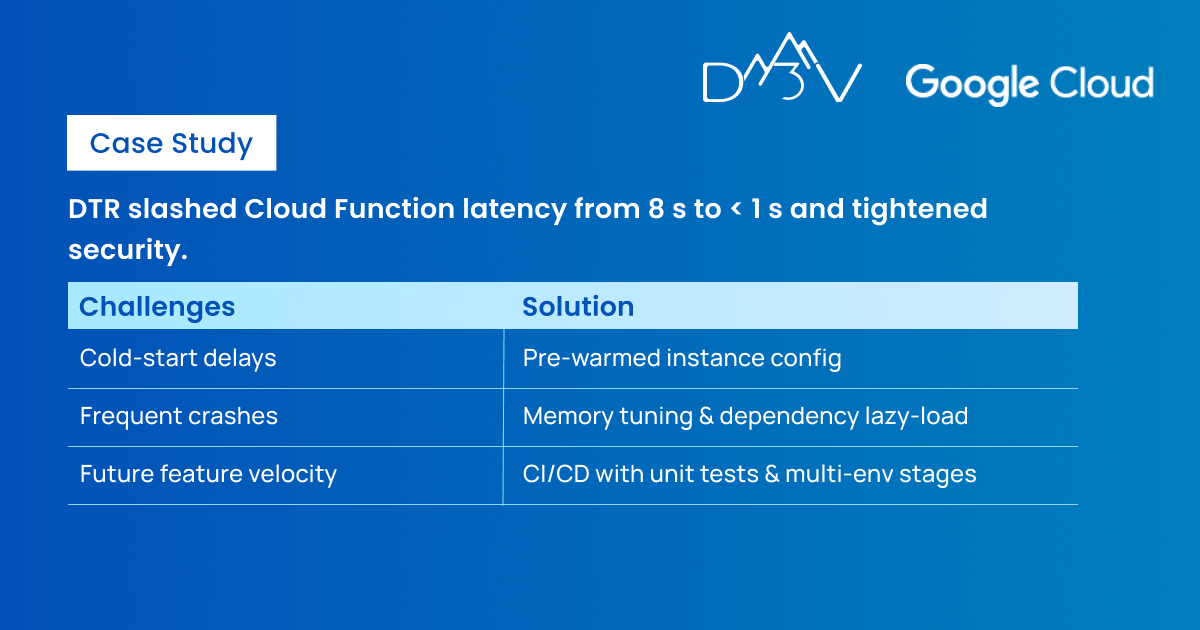

DTR Reduces App Response Time By 70% And Strengthens Security Through Google Cloud And Firebase

Overview

The Down to Ride (DTR) app was launched in July 2020 by Social Active to help cyclists find and plan group rides with their friends. DTR works by connecting users in the app to friends who are looking to ride on a specific day. Users can then join any ride with their friends. DTR is free to use and makes money through corporate sponsorships and premium upgrades. The app is available on iOS and Android in the U.S.

DTR’s ultimate goal is to help users efficiently find bicycle rides that will be fun. This means the app needs to run smoothly, simplifying the discovery and coordination of in-real-life (IRL) activity through digital technology and a friendly user experience. DTR’s infrastructure is powered by Firebase. The project leveraged most of Firebase’s product suite, including:

- Firebase Analytics

- Firebase Auth

- Firebase Hosting

- Firebase Firestore

- Firebase Cloud Functions

- Firebase Messaging

- Firebase Storage

- Firebase Dynamic Links

- Firebase Admin SDK

DTR was seeking expert Google Cloud Architects, Google Cloud DevOps Engineers, and Google Cloud Developers conversant with Firebase to successfully launch a Minimum Viable Product (MVP) of their app. They wanted to assess their existing serverless infrastructure, improve performance, and have alternatives for application deployment (avoiding vendor lock-in).

DTR aimed to future-proof the application and scale to handle a large user base in multiple geographical regions. They planned to optimize their Firestore DB design, rapidly develop new functionalities, and support existing features. DTR also needed support to handle daily Firebase deployments.

The Challenge

How do you release an MVP of your app that is robust, reliable, and secure? DTR was trying to answer this by improving their serverless architecture since cold starts created longer response times on the backend server. To achieve this goal, they’d also have to optimize their database design for faster data retrieval.

Considering the bigger picture, the application had to be highly scalable to serve users in multiple geographical locations. Another daunting challenge was how to rapidly add new functionality to the pre-existing feature set, such as better exception handling across the application. DTR had observed:

- Significant latency (8 seconds) between the time dtrCommand is invoked and the time the Cloud Function is triggered. Ideally, Cloud Function execution should take around 200ms.

- Frequent application crashes that made the latency issue worse. Every crash starts a fresh Cloud Function instance, which increases latency within the application.

DTR hoped to have their technology supported on Google Cloud and connected vendors. They needed cloud engineers and DevOps support to perform cloud administration, provide alternative deployment options for their application (eliminating vendor lock-in), deploy daily, integrate unit testing in their daily deployments, improve their cloud security and create a disaster recovery plan.

We had several detailed chat/email conversations with D3V about the project requirements and then after our initial meeting, we were convinced D3V was the right candidate to deliver this project.

-CEO of SocialActive.

Our Solution

We responded to DTR’s challenges by:

Managing cold starts with Firebase Cloud Functions

The D3V team of certified Google Cloud architects, DevOps engineers, and Cloud developers thoroughly reviewed DTR’s underlying serverless cloud architecture and identified the root cause of their latency issues. We remedied them by addressing the problem of cold starts of Firebase Cloud Functions.

Firebase Cloud Functions scales the number of instances based on incoming traffic. When a new instance is spun up, all the dependencies of the Node.js environment are loaded at startup, causing an additional delay known as a cold start. Since the app received varying traffic, many requests were affected by cold starts.

We solved this issue by preconfiguring a set of Firebase Cloud Function instances to stay warm, so that incoming requests could be processed without waiting for the application to load first. D3V performed an in-depth analysis of resource utilization and the nature of traffic on the DTR application. Based on the results, we chose the minimum number of instances to keep warm to avoid cold starts.
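As a rough illustration of how a warm-instance count could be derived from traffic data, the sketch below picks a percentile of observed concurrent-request samples. The function name, the sample data, and the percentile rule are assumptions made for this example and are not the analysis D3V actually ran. The resulting number is the kind of value that could be passed to the `minInstances` runtime option of the Firebase Functions SDK.

```typescript
// Hypothetical helper: choose how many instances to keep warm, given samples
// of how many requests were being served concurrently at different times.
// A lower percentile keeps fewer instances warm (cheaper, more cold starts);
// a higher percentile keeps more warm (costlier, fewer cold starts).
function warmInstanceCount(concurrencySamples: number[], percentile = 0.5): number {
  if (concurrencySamples.length === 0) {
    return 0; // no traffic data, so nothing to keep warm
  }
  const sorted = [...concurrencySamples].sort((a, b) => a - b);
  // Nearest-rank percentile, clamped to valid array indices.
  const rank = Math.ceil(percentile * sorted.length) - 1;
  const index = Math.min(sorted.length - 1, Math.max(0, rank));
  return Math.ceil(sorted[index]);
}

// Example with made-up samples: the median concurrency here is 2.
const suggestedMinInstances = warmInstanceCount([1, 2, 2, 3, 8], 0.5);
console.log(`keep ${suggestedMinInstances} instance(s) warm`);
```

Keeping instances warm trades a fixed idle cost for lower latency, which is why the count is tied to measured traffic rather than set to the observed peak.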

Optimizing Performance

We optimized the DTR app’s performance through many approaches. For example:

- We implemented lazy loading of dependencies so that only the Node.js packages a request actually uses are loaded, which brought latency under one second.

- Our team increased the memory allocated to Firebase Cloud Functions to optimize the backend processes related to complex database queries.

- We leveraged Google’s Cloud Trace to identify functions with higher latencies and optimized the code by reducing the number of DNS queries for Google APIs. This involved creating client objects in the global scope and updating slow database queries to improve performance.

- Our experts upgraded Node.js and the package dependencies to their latest versions to take advantage of the performance improvements they offered.

- We optimized networking for Cloud Functions by using keepalive to maintain persistent connections across function invocations, instead of creating a new connection every time a function is invoked.

- Our specialists optimized Cloud Firestore collections to be best suited for concurrent reads, writes, and pagination.
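Several of the optimizations above (lazy loading, global-scope clients, and keepalive connections) follow the same pattern: create an expensive object at most once per function instance, and only when a request needs it. The sketch below shows that pattern using Node's built-in `https.Agent`. The `lazy` helper and `handleRequest` function are hypothetical names for this example and are not taken from the DTR codebase.

```typescript
import * as https from "https";

// Wraps a factory so it runs at most once; later calls reuse the result.
function lazy<T>(factory: () => T): () => T {
  let value: T | undefined;
  let created = false;
  return () => {
    if (!created) {
      value = factory();
      created = true;
    }
    return value as T;
  };
}

// Declared in global scope, so a warm instance reuses it across invocations.
// keepAlive: true lets the agent reuse sockets instead of reconnecting.
const getAgent = lazy(() => new https.Agent({ keepAlive: true }));

// Hypothetical handler: requests that make no outbound call never pay
// the cost of creating the agent.
function handleRequest(needsOutboundCall: boolean): https.Agent | undefined {
  return needsOutboundCall ? getAgent() : undefined;
}
```

The same wrapper could hold any heavy dependency, such as a database or API client, so that cold starts only load what the first request touches.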

New Features

Our efforts resulted in some new features, such as:

Algolia Search Implementation

Firestore didn’t support querying for substring matches, so the client’s implementation relied on full or partial collection scans to return search results. The D3V team ran Proofs of Concept (PoCs) on several search providers and implemented Algolia because of its rich feature set and indexing capabilities.

The solution involved indexing each of the collections in Firestore to the Algolia indexes and leveraging Algolia’s SDK to provide accurate search results within a few milliseconds.
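To make the indexing step more concrete, here is a minimal sketch of turning a Firestore document into a search record. Algolia identifies each record by an `objectID`, so reusing the Firestore document ID keeps the two stores aligned. The function name and the field names are assumptions for illustration, and the actual upload through Algolia's SDK is left out.

```typescript
// A record as it would be sent to a search index.
interface SearchRecord {
  objectID: string;
  [field: string]: unknown;
}

// Hypothetical mapper: copy only the fields that should be searchable,
// so private data in the document never reaches the search index.
function toSearchRecord(
  docId: string,
  data: Record<string, unknown>,
  searchableFields: string[],
): SearchRecord {
  const record: SearchRecord = { objectID: docId };
  for (const field of searchableFields) {
    if (field !== "objectID" && field in data) {
      record[field] = data[field];
    }
  }
  return record;
}

// Example with made-up ride data: only the title is indexed.
const exampleRecord = toSearchRecord(
  "ride-1",
  { title: "Sunday loop", ownerEmail: "rider@example.com" },
  ["title"],
);
```

Whitelisting fields, rather than copying whole documents, is a design choice that also limits what the search service can expose.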

Robustness and Disaster recovery

We created backup and restore scripts for disaster recovery and environment setup, and scheduled weekly snapshots of the database. Our team also leveraged cost-effective Google Cloud Storage buckets to store the database snapshots.
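One small piece of such a backup script is deciding where each snapshot lands. The sketch below builds a dated Cloud Storage destination, which is the kind of path a managed export such as `gcloud firestore export` accepts. The bucket name and folder layout are assumptions for this example and do not describe DTR's actual setup.

```typescript
// Hypothetical helper: build a dated destination so each weekly snapshot
// gets its own folder and older snapshots are never overwritten.
function snapshotPrefix(bucket: string, takenAt: Date): string {
  const day = takenAt.toISOString().slice(0, 10); // YYYY-MM-DD, in UTC
  return `gs://${bucket}/firestore-snapshots/${day}`;
}

// Example with a made-up bucket name and a fixed date.
const exampleDestination = snapshotPrefix(
  "example-backup-bucket",
  new Date(Date.UTC(2021, 0, 3)),
);
```

Using the UTC date keeps folder names stable regardless of the time zone of the machine running the script.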

Improved DevOps

We set up Production, Staging, and User Acceptance Testing (UAT) cloud environments and established a Continuous Integration and Continuous Delivery (CI/CD) process to support daily deployments. Our experts developed unit tests using the Firestore unit testing framework and integrated them with the CI/CD process.

We followed the principle of least privilege to improve application security with service accounts and Identity and Access Management (IAM) rules. Our team also analyzed the communication between client devices and Firebase Cloud Functions and created security policies so that they communicate securely.

We were able to release several features in the app rapidly because of a dedicated Firebase backend development team. The backend Firebase Infrastructure was also optimized, especially the Firebase Cloud Functions and our apps on iOS and Android run faster now.

-CEO of SocialActive.

Key Accomplishments

We are proud to have made various accomplishments under this project, including:

- Reducing application latency significantly and improving performance.

- Optimizing the serverless architecture through numerous in-depth analyses and PoCs.

- Developing and integrating unit tests into the CI/CD process.

- Delivering new features and functionalities rapidly and supporting existing app functionalities.

- Recommending future improvements and an architecture design on Cloud Run to meet the application’s need for robustness.

- Provisioning Production, Staging, and UAT environments and supporting daily deployment needs.